AI Engineer Europe 2026 - day 2

By Judith van Stegeren

AI Engineer Europe 2026 took place from 8 to 10 April 2026 in London. It was the first ai.engineer event in Europe. This is a conference report of day 2.

- AI Engineer Europe 2026 - day 1.

- AI Engineer Europe 2026 - day 3 blogpost is coming soon!

Keynotes

What is an AI engineer anyway?

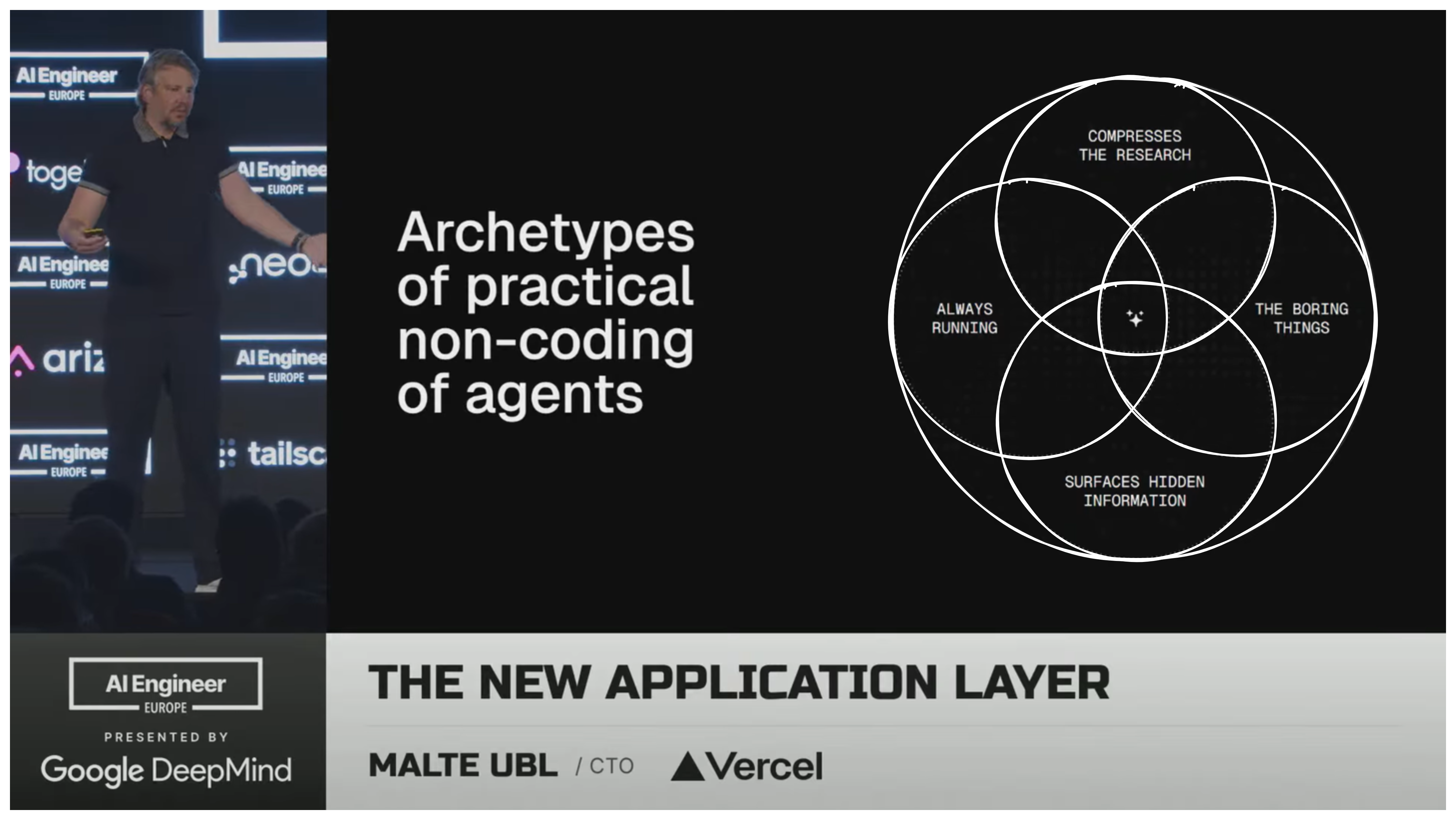

I was fascinated by this quote from Malte Ubl (Vercel): "In many ways, AI engineering is the legitimate successor of web development, as a really mainstream discipline of engineering that will shape the next decade of software development." This is very different from my own definition of "AI engineering", so the term "AI engineer" is more subjective and overloaded than I thought. Does it mean "engineer specialized in machine learning", or does it mean "engineer that uses AI for coding"?

There was also a very nice moment when the slide deck showed an accidental datakami logo. :D

DeepMind research highlights

Raia Hadsell from Google DeepMind gave a keynote with a research overview. She talked about the "problems worth solving" that DeepMind is trying to tackle using generative AI. This reminded me of mathematician Richard Hamming's plea to take a moment every week to Think Great Thoughts and work on important problems. I especially liked hearing about DeepMind's models for weather forecasts and predicting cyclone paths.

Hadsell talked about "Jennifer Aniston cells", a concept from neuroscience. These are combinations of cells that only activate for a specific person or concept, regardless of modality. They help with rapid recognition, information retrieval, and comparisons. Useful for humans -- and maybe for neural networks? This concept was also integrated in the new multi-modal Gemini embeddings 2 (gemini-embeddings-002) which has a unified multi-modal latent space. I've experimented with Gemini embeddings 2 in the past month and they perform worse on text inputs than Gemini embeddings 1 -- but they should perform better than existing models on multi-modal inputs.

Other interesting mentions were MuJoCo, an advanced physics engine, and a demo of Genie 3, a new general-purpose world model.

OpenAI's token billionaires

The next keynote was by Ryan Lopopolo (OpenAI), who has written my favorite blogpost about AI legibility of code bases.

He enthusiastically opened with "I am a token billionaire". Burning lots of tokens has become a proxy for being innovative, but I'm not a fan of this framing. I can think of many ways to burn tokens without any real purpose: from lazy context management to generating random strings. Using the amount of tokens burned as a proxy for innovation incentivizes "productivity theater" -- the AI equivalent of Bruce Schneier's security theater.

One thing I liked about this keynote was a bootstrapping method Lopopolo showed. If we assume that agents should be able to do every aspect of human (engineering) work, almost every text should be considered a prompt! Documentation, error messages, contracts, meeting notes, review comments, etc. You can take the prompting guide for your model of choice from the official docs, create a “create-prompt” Skill from that, and use that Skill for creating any text that will be used downstream by coding agents. Handy for making sure your code outputs error messages that agents can work with. And naturally, the engineers at OpenAI are working with GPT-5.4.

Peter Steinberger (OpenClaw, now OpenAI) gave a keynote that was mostly a rebuttal against OpenClaw critics. To illustrate how swamped with security reports the OpenClaw maintainers are, he said "Our AGENTS.md is just 'don't submit X as security issue!'" In practice, that doesn't seem to be the case. If you want some inspiration for managing a firehose of agentic contributions for your website, code or project, I think the OpenClaw repository is a good place to start.

Evals track

After the keynotes I joined the room where all the talks about evals were scheduled. We do a lot of evals for Datakami clients, so I was curious to hear about new developments.

Building eval platforms

Phillip Hetzel (Braintrust) talked about evals as a tool for experiments. He presented Braintrust as a platform for non-tech users, where they can easily change bits of prompts and configs of LLM workflows, and run this as an experiment. I view evals more as a tool for monitoring and verification, but that might be my data science background. If you have an approach for doing evals that can be used by agents, you can certainly use them for Karpathy's auto-research approach and hillclimb to a better implementation.

Hetzel discussed the challenges of building an eval platform: "building an eval platform is not a UI problem, but a systems engineering problem." Typical technologies for building platforms are not powerful enough for the requirements of eval platforms, e.g. dealing with large volumes of traces, storing heterogeneous data, and storing large AI inputs as blobs. This is in line with my experience with Langfuse: they switched over to ClickHouse for trace storage in 2024, which was a big improvement.

At the conference, I got the feeling that most eval platforms originated at observability tool startups, or vice versa. There's a similar pattern in startups that are building prompt management tools or Skill registries. Braintrust started as an eval platform, but when their customers started piping production data into it, they added tracing/observability functionality.

Offload knowledge, keep the reasoning capabilities

Maxime Labonne (Liquid AI) gave a really good talk about optimizing small LLMs for running on edge devices such as mobile phones. Specifically, they tried to scale down the embeddings layer of open source models (e.g. Gemma 3 from 60% of the total model to only 19%), so there's more room available for the other model layers. This leads to an upgrade on speed and "smarts" with the same memory footprint.

Maxime mentioned the Chinchilla scaling laws, which say that to train an optimal model, we need around 20 text tokens of training data per model parameter.
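To get a feel for what that rule of thumb means in practice, here is a small back-of-the-envelope calculation. The factor of 20 comes from the talk; the model sizes are just illustrative values I picked, not numbers from the presentation.

```python
# Rule of thumb quoted in the talk: ~20 training tokens per model parameter.
TOKENS_PER_PARAM = 20


def chinchilla_optimal_tokens(n_params: int, tokens_per_param: int = TOKENS_PER_PARAM) -> int:
    """Approximate compute-optimal number of training tokens for a model size."""
    return n_params * tokens_per_param


# Illustrative model sizes (assumptions, not taken from the talk).
for n_params in (350_000_000, 1_200_000_000, 7_000_000_000):
    tokens = chinchilla_optimal_tokens(n_params)
    print(f"{n_params / 1e9:.2f}B params -> ~{tokens / 1e9:.0f}B training tokens")
```

So by this heuristic, a model with roughly 1.2 billion parameters would want on the order of 24 billion training tokens.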

This talk also had an interesting bit about "doom-looping", i.e. when an LLM starts to repeat output tokens and cannot recover from this. Doom-looping got in the way of performing evals. Maxime shared a neat reinforcement learning trick that they used during post-training to discourage this behaviour in the LFM2.5-1.2B-Thinking model. They combined this with an n-gram repetition penalty to stamp out doom-looping: it went from 15.74% to 0.36% of eval cases.
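I don't know the details of Liquid AI's implementation, but the general idea of flagging doom-loops with n-grams is easy to sketch. The snippet below is my own minimal version for use in an eval harness: it measures which share of the n-grams in an output has already appeared earlier in that output. The function names, the n-gram size and the threshold are all assumptions on my part.

```python
from collections import Counter


def repeated_ngram_fraction(tokens: list[str], n: int = 4) -> float:
    """Share of n-grams that repeat an earlier n-gram (0.0 means no repetition)."""
    ngrams = [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]
    if not ngrams:
        return 0.0
    counts = Counter(ngrams)
    # Every occurrence beyond the first one counts as a repeat.
    repeats = sum(count - 1 for count in counts.values())
    return repeats / len(ngrams)


def is_doom_loop(tokens: list[str], n: int = 4, threshold: float = 0.5) -> bool:
    """Flag an output as doom-looping when most of its n-grams are repeats.

    The threshold of 0.5 is an arbitrary choice for illustration.
    """
    return repeated_ngram_fraction(tokens, n) >= threshold


healthy = "the cat sat on the mat and looked at the dog".split()
looping = ["so", "wait"] * 20

print(is_doom_loop(healthy))  # False
print(is_doom_loop(looping))  # True
```

The same score could serve as a penalty term during training, but here it only marks eval cases that should be excluded or counted as failures.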

The model under discussion (the LFM2.5 family?) was optimized for data extraction and tool use. Because these models are supposed to run on devices, there's a strict limit on the memory that's available to the model. If you shrink the model dramatically, you have to reduce the number of parameters, which is basically the model's 'memory', i.e. the storage for patterns it learned during training. This means: less room for storing facts, nuance, or complexity from the training data. Labonne proposes circumventing these problems with internet access or RAG, and just making sure your tiny model has really good reasoning capabilities.

Maxime's presentation had good visualizations of the various aspects of model training and the phases of post-training of LLMs. Once the talk is online, I'll post a link here. This was such an interesting talk that I want to start hacking on small models as well!

Making Langfuse more AI-friendly

Marc Klingen (Langfuse) talked about how they're changing the Langfuse product to be more agent-friendly. That includes writing their docs for agents, not just humans, and providing Skills for common usage patterns. The Langfuse team instrumented their own Claude Codes so they could see what the agents were doing with Langfuse.

One of the problems they've encountered is that old Langfuse documentation is part of the training data of LLMs, which confuses AI agents, as they try to use functionality that has disappeared or changed.

Langfuse recently released a CLI. However, agents tend to hallucinate command line flags, just because there are so many CLI tools in their training data. A nice solution to this: force agents (with Skills or AGENTS.md) to always call the Langfuse CLI with the --help flag first, so there's little chance of the agents hallucinating command line flags that don't exist (anymore).
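You can also enforce the "--help first" rule in code, by checking a proposed command against the tool's help text before running it. Below is a minimal sketch of that idea. The tool name `exampletool` and its help text are made up for this example (I'm deliberately not guessing at the real Langfuse CLI flags), and the regex only looks at long options.

```python
import re

# Help output of a hypothetical tool, as you would capture it from `<tool> --help`.
EXAMPLE_HELP = """Usage: exampletool export [OPTIONS]

Options:
  --format TEXT   Output format
  --limit INT     Maximum number of rows
  --help          Show this message and exit
"""


def flags_from_help(help_text: str) -> set[str]:
    """Collect every long option (e.g. --format) mentioned in the help text."""
    return set(re.findall(r"(?<![\w-])--[a-zA-Z][\w-]*", help_text))


def unknown_flags(command: list[str], help_text: str) -> list[str]:
    """Return the long options in `command` that the help text does not mention."""
    known = flags_from_help(help_text)
    used = [arg.split("=", 1)[0] for arg in command if arg.startswith("--")]
    return [flag for flag in used if flag not in known]


proposed = ["exampletool", "export", "--format=json", "--since", "2026-01-01"]
print(unknown_flags(proposed, EXAMPLE_HELP))  # ['--since']
```

In a real setup you would fetch the help text with something like `subprocess.run([tool, "--help"], capture_output=True, text=True).stdout` and feed any unknown flags back to the agent as an error message.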

The Langfuse team changed the website in various ways to be more agent-friendly. The Langfuse website serves .md files by default for agents, so you don't accidentally burn unnecessary tokens on ingesting HTML. And there's a special endpoint for asking questions about the docs. Inputs are sent to a logged RAG service. This way, agents don't have to process multiple pages to get a short answer to a straightforward question, and the Langfuse team gets feedback about what users/agents are asking. Win-win!
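The talk didn't go into how the website decides who gets Markdown, so the following is purely my own guess at one way to do it: plain HTTP content negotiation on the `Accept` header. Browsers explicitly ask for `text/html`, while most scripts and agents send `*/*` or ask for Markdown directly. The function name and the fallback rule are assumptions.

```python
def pick_content_type(accept_header: str) -> str:
    """Choose between Markdown and HTML based on the request's Accept header.

    Assumed policy: HTML only for clients that explicitly ask for it
    (browsers do), Markdown for everyone else.
    """
    # Strip quality parameters such as ";q=0.9" and normalise case.
    accepted = {part.split(";")[0].strip().lower() for part in accept_header.split(",")}
    if "text/markdown" in accepted:
        return "text/markdown"
    if "text/html" in accepted:
        return "text/html"
    return "text/markdown"


browser = "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8"
print(pick_content_type(browser))          # text/html
print(pick_content_type("*/*"))            # text/markdown
print(pick_content_type("text/markdown"))  # text/markdown
```

This sketch ignores the `q` weights entirely, which is good enough to show the idea but not a full implementation of HTTP content negotiation.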

A final take-away: if you try to do “autoresearch” (letting an agent hillclimb a problem with an automated feedback loop), be careful what you wish for. Example: the Langfuse team tried to minimize the number of turns when optimizing an LLM workflow. This request led the agent to remove LLM conversation turns meant for getting up-to-date documentation. Long story short: it's easy to accidentally optimize yourself into new bugs.

Afternoon keynotes

Interview with Gergely Orosz

Organizer swyx interviewed Gergely Orosz, who writes The Pragmatic Engineer newsletter. The down-to-earth responses by Orosz were a wonderful change from the AI hype-sters ("AGI pilled", "Clawdboys", etc.) that were vocally present at the conference. If you rewatch one talk of this day, watch this interview.

They talked about the new culture of "token maxing", or judging the productivity of engineers by the amount of LLM tokens they're using, and whether this is a good or bad development. Orosz: "Like any data point, it can be weaponized".

Orosz also reflected on how engineering roles will change now that we have coding agents. Is managing agents the same as managing humans? Agent managing is indeed orchestration, but not similar to people management: fewer social problems and faster feedback loops.

And why aren't we seeing a dramatic increase in productivity at large tech companies? Tech companies are not shipping more features, but they're rebuilding all their internals to be more AI native. They're rebuilding internal infrastructure, and building custom solutions that suit their needs better than off-the-shelf stuff. This is a low risk way of getting more hands-on experience with AI too; plus anything that uses AI currently gets funding.

Building Skills from software engineering books

Matt Pocock (AI Hero) gave a keynote about classical software engineering books, and how you can use them to build new Claude Code workflows and Skills. He encouraged the audience to build a shared understanding with your coding agent before generating any code.

"My Skill /grill-me is better than Claude’s plan mode". Pocock showed a Skill that lets the LLM ask 40-100 questions to clarify the problem before building anything. I anticipate similar problems as with the demand-driven concept approach, because one LLM can ask more questions than 1000 wise engineers can answer. However, I still prefer "thoughtful software design in collaboration with agents" to "blindly vibecoding all the things".

Final thoughts

During the conference, it stood out to me that very few people were talking about the downsides of using agents for everything: hallucinations, privacy, the impact of automated decisions for everything. Most of the discourse is about scaling up (any) human activity and moving as fast as possible. For example, an engineering manager at a large tech company said he had already automated all performance reviews with his team members by transcribing all his meetings with AI, and asking an AI agent to evaluate them based on the transcripts.

It was encouraging that some power-users of agents do seem to be thinking about operational security, although I think that's because we've recently seen plenty of examples of how LLMs and agentic systems can be destructive at scale. Either by design or by accident.


More like this

Subscribe to our newsletter "Creative Bot Bulletin" to receive more of our writing in your inbox. We only write articles that we would like to read ourselves.